Deploy lakeFS on AWS

Expected deployment time: 25 min

Prerequisites

Users that require S3 access using virtual host addressing should configure an S3 Gateway domain.

Preparing the Database for the Key Value Store

lakeFS uses a key-value store to synchronize actions on your repositories. Out of the box, this key-value store can rely on DynamoDB or PostgreSQL. Because lakeFS is open source, you can also write your own implementation and use any other database. The following two sections explain how to set up either PostgreSQL or DynamoDB as the backing key-value database.

Creating PostgreSQL Database on AWS RDS

We will show you how to create a database on AWS RDS but you can use any PostgreSQL database as long as it’s accessible by your lakeFS installation.

If you already have a database, take note of its connection string and skip to the next step.

- Follow the official AWS documentation on how to create a PostgreSQL instance and connect to it. You may use the default PostgreSQL engine, or Aurora PostgreSQL. Make sure that you’re using PostgreSQL version >= 11.

-

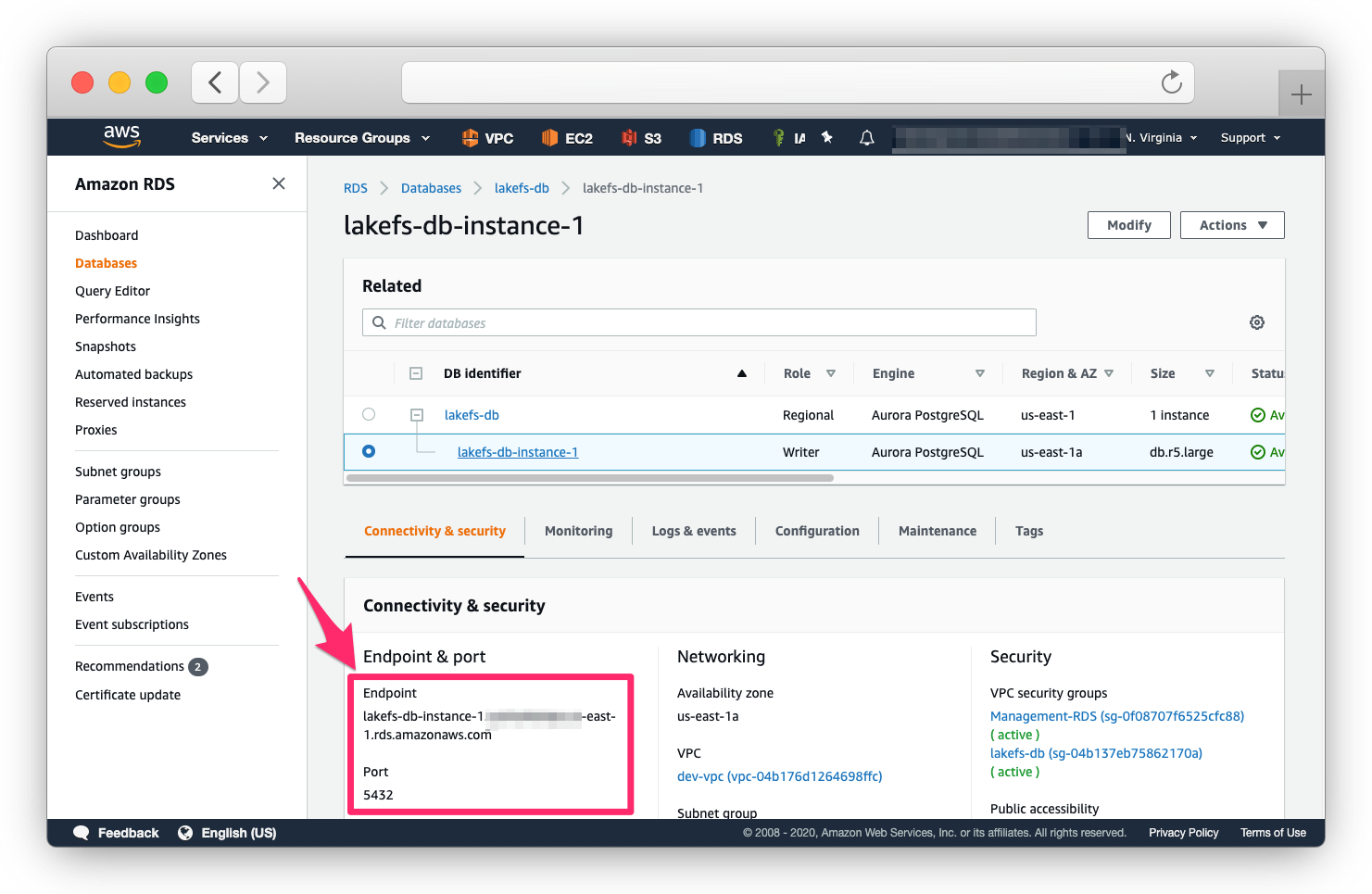

Once your RDS is set up and the server is in

Availablestate, take note of the endpoint and port.

- Make sure your security group rules allow you to connect to the database instance.
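As a minimal sketch, the endpoint and port noted above can be combined into a connection string for lakeFS. The helper name `build_pg_connection_string` and every value passed to it are hypothetical placeholders, and the `postgres://` URI form is an assumption about what your installation expects; check the configuration reference before relying on it.

```shell
#!/bin/sh
# Assemble a PostgreSQL connection URI from the RDS endpoint and port.
# Hypothetical helper; all example values below are placeholders.
build_pg_connection_string() {
  user="$1"
  password="$2"
  endpoint="$3"
  port="$4"
  dbname="$5"
  printf 'postgres://%s:%s@%s:%s/%s\n' \
    "$user" "$password" "$endpoint" "$port" "$dbname"
}

# Example with placeholder values:
build_pg_connection_string lakefs 'example-password' \
  mydb.abc123.us-east-1.rds.amazonaws.com 5432 postgres
```

Passwords containing characters such as `@`, `:` or `/` must be percent-encoded before they are placed in a URI; this sketch does not do that.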

DynamoDB on AWS

DynamoDB on AWS does not require any specific preparation other than configuring lakeFS to use it and providing valid AWS credentials. Refer to the database.dynamodb section in the configuration reference for the complete list of configuration options.

AWS credentials for DynamoDB can also be provided via environment variables, as described in the configuration reference.

Refer to the AWS documentation for further information on DynamoDB.

Installation Options

On EC2

- Edit and save the following configuration file as config.yaml:

    ---
    database:
      type: "postgres" # or "dynamodb"

      # when using dynamodb
      dynamodb:
        table_name: "[DYNAMODB_TABLE_NAME]"
        aws_region: "[DYNAMODB_REGION]"

      # when using postgres
      postgres:
        connection_string: "[DATABASE_CONNECTION_STRING]"

    auth:
      encrypt:
        # replace this with a randomly-generated string:
        secret_key: "[ENCRYPTION_SECRET_KEY]"

    blockstore:
      type: s3
      s3:
        region: us-east-1 # optional, fallback in case discover from bucket is not supported

- Download the binary to the EC2 instance.

- Run the lakefs binary on the EC2 instance:

    lakefs --config config.yaml run

Note: It’s preferable to run the binary as a service using systemd or your operating system’s facilities.
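The configuration asks for a randomly generated string in place of `[ENCRYPTION_SECRET_KEY]`. One possible way to produce such a value is sketched below; the function name `generate_secret_key` and the choice of 32 random bytes (64 hex characters) are assumptions made for illustration, not lakeFS requirements.

```shell
#!/bin/sh
# Print 32 random bytes from /dev/urandom as 64 lowercase hex characters.
# Hypothetical helper; the key length is an illustrative choice.
generate_secret_key() {
  head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n'
  echo
}

# Example: keep the value in a variable, then paste it into config.yaml
# as auth.encrypt.secret_key.
secret_key="$(generate_secret_key)"
echo "generated a key of ${#secret_key} characters"
```

Generate the key once and keep it with your other secrets rather than regenerating it on every start.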

On ECS

To support container-based environments like AWS ECS, lakeFS can be configured using environment variables. Here are a couple of docker run commands to demonstrate starting lakeFS using Docker:

With PostgreSQL

    docker run \
      --name lakefs \
      -p 8000:8000 \
      -e LAKEFS_DATABASE_TYPE="postgres" \
      -e LAKEFS_DATABASE_POSTGRES_CONNECTION_STRING="[DATABASE_CONNECTION_STRING]" \
      -e LAKEFS_AUTH_ENCRYPT_SECRET_KEY="[ENCRYPTION_SECRET_KEY]" \
      -e LAKEFS_BLOCKSTORE_TYPE="s3" \
      treeverse/lakefs:latest run

With DynamoDB

    docker run \
      --name lakefs \
      -p 8000:8000 \
      -e LAKEFS_DATABASE_TYPE="dynamodb" \
      -e LAKEFS_DATABASE_DYNAMODB_TABLE_NAME="[DYNAMODB_TABLE_NAME]" \
      -e LAKEFS_AUTH_ENCRYPT_SECRET_KEY="[ENCRYPTION_SECRET_KEY]" \
      -e LAKEFS_BLOCKSTORE_TYPE="s3" \
      treeverse/lakefs:latest run

See the reference for a complete list of environment variables.
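Comparing the docker run commands with config.yaml suggests a naming pattern: a configuration key such as database.type appears as LAKEFS_DATABASE_TYPE. The sketch below encodes that pattern (prefix `LAKEFS_`, dots replaced with underscores, upper-cased) in a hypothetical helper, `lakefs_env_name`. The rule is inferred from the examples on this page, so confirm any derived name against the reference.

```shell
#!/bin/sh
# Derive an environment variable name from a dotted configuration key.
# Hypothetical helper; the mapping rule is inferred from this page's examples.
lakefs_env_name() {
  printf 'LAKEFS_%s\n' \
    "$(printf '%s' "$1" | tr '.' '_' | tr '[:lower:]' '[:upper:]')"
}

lakefs_env_name database.postgres.connection_string
# prints LAKEFS_DATABASE_POSTGRES_CONNECTION_STRING
```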

On EKS

Load balancing

Depending on how you chose to install lakeFS, you should have a load balancer direct requests to the lakeFS server.

By default, lakeFS operates on port 8000, and exposes a /_health endpoint which you can use for health checks.
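Before pointing a load balancer at the server, it can help to confirm that the health endpoint responds. The following is a minimal sketch that polls /_health with curl; the function name `wait_for_lakefs`, the `localhost` address, and the retry count are assumptions chosen for illustration.

```shell
#!/bin/sh
# Poll the lakeFS health endpoint until it answers successfully.
# Hypothetical helper; the URL and attempt count defaults are placeholders.
wait_for_lakefs() {
  url="${1:-http://localhost:8000/_health}"
  attempts="${2:-30}"
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # -f makes curl exit non-zero on HTTP error responses.
    if curl -fsS -o /dev/null "$url"; then
      echo "lakeFS is healthy"
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  echo "lakeFS did not become healthy after $attempts attempts" >&2
  return 1
}

# Usage (run on the host where lakeFS listens on port 8000):
#   wait_for_lakefs http://localhost:8000/_health 10
```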

Notes for using an AWS Application Load Balancer

- Your security groups should allow the load balancer to access the lakeFS server.

- Create a target group with a listener for port 8000.

- Set up TLS termination using the domain names you wish to use (e.g., lakefs.example.com and potentially s3.lakefs.example.com, *.s3.lakefs.example.com if using virtual-host addressing).

- Configure the health check to use the exposed /_health URL.

Next Steps

Your next step is to prepare your storage. If you already have a storage bucket/container, you’re ready to create your first lakeFS repository.